Confidence Intervals

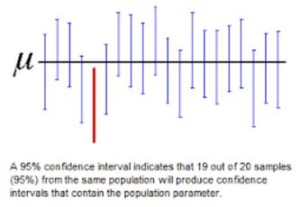

Finally, the research around standardized testing acknowledges that the score a child obtains is never a perfect measurement. To account for measurement and human error, the scores described above are often reported alongside a confidence interval. A confidence interval is a range of scores around the obtained score that is likely, at a stated level of confidence, to contain the child’s true score. Since no test can claim that any single score is 100% accurate, the interval makes a more defensible claim about a range of scores instead.

For example, let’s say a child received a scaled score of 8, with a 95% confidence interval of 7-9. This means that, with high certainty, the child’s true score lies between 7 and 9, even if the obtained score of 8 is not exactly right. Here is another example: let’s say a child received a standard score of 110, with a 90% confidence interval of 98-124. The score of 110 cannot be guaranteed, but it can be said with 90% confidence that the child’s true score falls within the given range. Put another way, if we could theoretically give this child the same test 100 times, and they did not learn anything from retaking it, we would expect about 90 of those scores to fall between 98 and 124.

As a rule of thumb, a 95% confidence interval covers a wider range of scores than a 90% confidence interval for the same test: being more certain that the range contains the true score requires a wider range, while a 90% interval is narrower but carries less certainty. Most psychological tests report 95% confidence intervals and are quite reliable, often more so than medical tests. Next time you have your blood pressure taken at your physician’s office, ask them to check your left AND right arms; you are very likely to see some meaningful variation.
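
For readers curious about where such ranges come from, here is a minimal sketch of one common textbook approach: the obtained score plus or minus a critical value times the standard error of measurement (SEM). The function name and the reliability value of 0.90 are assumptions chosen for illustration; published tests may build their intervals differently, which is why the output will not match the ranges quoted in the examples above.

```python
from math import sqrt
from statistics import NormalDist


def confidence_interval(score, sd, reliability, level):
    """Return (low, high) for an obtained score using score +/- z * SEM."""
    # Standard error of measurement: larger when the test is less reliable.
    sem = sd * sqrt(1 - reliability)
    # Two-sided critical value from the normal curve (about 1.645 for 90%, 1.96 for 95%).
    z = NormalDist().inv_cdf(0.5 + level / 2)
    return score - z * sem, score + z * sem


# Hypothetical values: standard score of 110, SD of 15, reliability of 0.90.
low90, high90 = confidence_interval(110, 15, 0.90, 0.90)
low95, high95 = confidence_interval(110, 15, 0.90, 0.95)

print(f"90% interval: {low90:.0f} to {high90:.0f}")
print(f"95% interval: {low95:.0f} to {high95:.0f}")
```

With these assumed inputs, the 95% interval comes out slightly wider than the 90% interval for the same score, because a higher confidence level requires a larger critical value.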

Visit the South County Child & Family Consultants website for more great articles!
